ECS Parameter Store Synchroniser

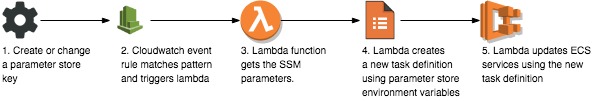

Working with credentials within ECS and passing them around is not entirely straightforward. As one way of doing this, this solution keeps all environment variables in the AWS Parameter Store, then automatically synchronises them with the running tasks in a set of specified ECS clusters.

This solution uses a Lambda function, triggered by CloudWatch Events on EC2 Parameter Store operations, to update the environment variables on the specified tasks and ECS clusters.
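To make the trigger step concrete, here is a minimal sketch of how a handler might decide whether an incoming event is relevant. It assumes the standard shape of a Parameter Store change event (`source` of `aws.ssm`, `detail-type` of `Parameter Store Change`, and the key in `detail.name`). The function name `changed_parameter` is hypothetical and is not taken from the zipped Lambda code.

```python
from typing import Optional


def changed_parameter(event: dict, keybase: str) -> Optional[str]:
    """Return the changed parameter name if the event is a Parameter Store
    change under `keybase`, otherwise None. (Hypothetical helper.)"""
    if event.get("source") != "aws.ssm":
        return None
    if event.get("detail-type") != "Parameter Store Change":
        return None
    name = event.get("detail", {}).get("name", "")
    # Normalise the base so "/environment/dev" and "/environment/dev/" behave the same.
    prefix = keybase.rstrip("/") + "/"
    return name if name.startswith(prefix) else None


# Trimmed-down example event; real events carry more fields.
sample_event = {
    "source": "aws.ssm",
    "detail-type": "Parameter Store Change",
    "detail": {
        "name": "/environment/dev/MyTestKey1",
        "operation": "Update",
        "type": "String",
    },
}
```

Events for keys outside the configured base return `None`, so the handler can exit early without touching ECS.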

Although the template here is a basic example of this approach, combining a defined Parameter Store key layout with granular access control on both key hierarchies and ECS clusters gives a decent key store for ECS, fully managed by AWS, that can be run in production systems.
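As an illustration of what a defined key layout buys you, the sketch below maps keys under a base path onto the `environment` list of an ECS container definition. It assumes flat keys directly under the base (for example `/environment/dev/MyTestKey1` becomes `MyTestKey1`). The helper names `env_name` and `merge_environment` are hypothetical, and the real Lambda may do this differently.

```python
def env_name(parameter_name: str, keybase: str) -> str:
    """Strip the key base from a parameter name to get the variable name."""
    prefix = keybase.rstrip("/") + "/"
    if not parameter_name.startswith(prefix):
        raise ValueError(f"{parameter_name!r} is not under {prefix!r}")
    return parameter_name[len(prefix):]


def merge_environment(container: dict, parameters: dict, keybase: str) -> dict:
    """Return a copy of a container definition with parameter values merged
    into its environment; existing variables with the same name are replaced."""
    env = {item["name"]: item["value"] for item in container.get("environment", [])}
    for name, value in parameters.items():
        env[env_name(name, keybase)] = value
    updated = dict(container)  # shallow copy, the input is left unchanged
    updated["environment"] = [
        {"name": key, "value": env[key]} for key in sorted(env)
    ]
    return updated
```

The updated container definition would then go into a new task definition revision, which is what the target services are moved onto.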

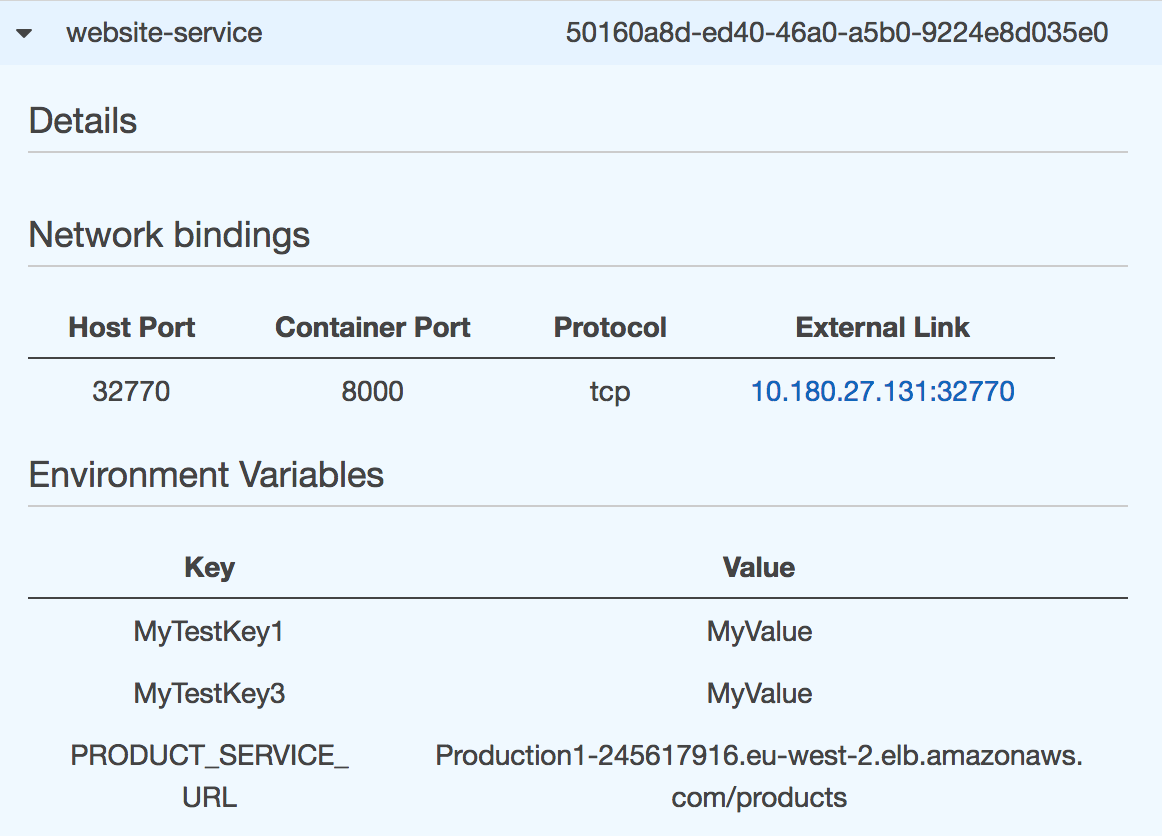

As with all approaches that pass environment variables to containers, the benefits of simplicity and a secrets-backend-agnostic design come with the risks of placing secrets in container environment variables. In this case they will be exposed in the ECS console and, if SSH access has been enabled, on the instance itself.

Running the example

Create a demo cluster

As our example cluster we’ll use the AWS ECS Refarch.

Setting up the lambda function

Upload the zipped lambda code ecs-parameter-store-sync.zip to a bucket in your own account, in the same region as the stack will be deployed.

The Lambda function role needs access to both AWS SSM and ECS. In this template the permissions are broad for simplicity, but they could easily be made more specific.

    ecs:*
    ssm:*
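As a rough idea of what "more specific" could look like, the sketch below builds a narrower policy document. The action list is an assumption about which calls a synchroniser of this kind needs, since the Lambda code itself is not shown here. The function name `lambda_policy` and the account ID in the usage line are placeholders.

```python
import json


def lambda_policy(region: str, account_id: str, keybase: str) -> dict:
    """Build a narrower IAM policy document for the sync Lambda (sketch only)."""
    path = keybase.strip("/")
    parameter_arn = f"arn:aws:ssm:{region}:{account_id}:parameter/{path}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Read only the keys under the configured base path.
                "Effect": "Allow",
                "Action": ["ssm:GetParametersByPath"],
                "Resource": [parameter_arn, parameter_arn + "/*"],
            },
            {
                # Assumed set of ECS calls; left on "*" for brevity and could
                # be scoped to specific clusters and services where supported.
                "Effect": "Allow",
                "Action": [
                    "ecs:ListServices",
                    "ecs:DescribeServices",
                    "ecs:DescribeTaskDefinition",
                    "ecs:RegisterTaskDefinition",
                    "ecs:UpdateService",
                ],
                "Resource": "*",
            },
        ],
    }


policy = lambda_policy("eu-west-1", "123456789012", "/environment/dev/")
policy_json = json.dumps(policy, indent=2)
```

If the task definitions use task or execution roles, the function role would most likely also need `iam:PassRole` on those roles.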

We also need to set environment variables to specify which clusters and tasks to target, along with the base string for all our Parameter Store keys.

    CLUSTER: ecs-example         # A comma-separated list of clusters to target
    TASKS: test-task             # A comma-separated list of tasks to update envs in
    KEYBASES: /environment/dev/  # The base of the keys in Parameter Store to apply
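A minimal sketch of how the function might read these settings is shown below. It uses the variable names exactly as listed above and assumes `KEYBASES` is comma-separated like the other two, which the listing does not confirm. `load_config` is a hypothetical helper.

```python
import os
from typing import Dict, List, Mapping


def split_list(raw: str) -> List[str]:
    """Split a comma-separated string, dropping blanks and stray whitespace."""
    return [item.strip() for item in raw.split(",") if item.strip()]


def load_config(environ: Mapping[str, str] = os.environ) -> Dict[str, List[str]]:
    """Read the target clusters, tasks and key bases from the environment."""
    return {
        "clusters": split_list(environ.get("CLUSTER", "")),
        "tasks": split_list(environ.get("TASKS", "")),
        "keybases": split_list(environ.get("KEYBASES", "")),
    }


example = load_config({
    "CLUSTER": "ecs-example, other-cluster",
    "TASKS": "test-task",
    "KEYBASES": "/environment/dev/",
})
```

Passing the mapping in as an argument keeps the parsing easy to check locally without setting real environment variables.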

Creating the stack

Set the parameters. If you’re using the demo ECS Refarch defaults, they will look like this:

    CLUSTERS=Production
    TASKS=website-service
    KEYBASE=/environment/dev

Plus the bucket where you stored the Lambda code:

    LAMBDA_BUCKET=<bucket with ecs-parameter-store-sync.zip>

Updating a parameter store key

With our key hierarchy in the form /environment/dev/* we can update keys by running:

    aws ssm put-parameter \
        --name "/environment/dev/MyTestKey1" \
        --value "MyValue" --type String

The changes should be reflected in the running ECS task.
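One detail worth knowing when updating rather than creating a key: `put-parameter` refuses to replace an existing parameter unless overwriting is requested (`--overwrite` on the CLI, `Overwrite=True` in boto3). The sketch below shows the same update from Python. It assumes boto3 is installed and credentials are configured, and the helper names are hypothetical.

```python
def put_parameter_args(name: str, value: str, overwrite: bool = True) -> dict:
    """Build the keyword arguments for an SSM put_parameter call."""
    return {
        "Name": name,
        "Value": value,
        "Type": "String",
        # Without this flag, updating an existing key fails.
        "Overwrite": overwrite,
    }


def put_parameter(name: str, value: str) -> dict:
    """Create or update a String parameter (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so the helper above works without boto3

    ssm = boto3.client("ssm")
    return ssm.put_parameter(**put_parameter_args(name, value))


args = put_parameter_args("/environment/dev/MyTestKey1", "MyValue")
```

Calling `put_parameter("/environment/dev/MyTestKey1", "MyValue")` should then trigger the same change event as the CLI command.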

EC2 Parameter Store

Put a test parameter into the store:

    aws ssm put-parameter \
        --type String \
        --name "/environment/dev/MyTestKey1" \
        --value "MyValue"

    aws ssm describe-parameters

    {
        "Parameters": [
            {
                "LastModifiedUser": "arn:aws:iam::xxxxx:user/Me",
                "LastModifiedDate": 1502220319.566,
                "Type": "String",
                "Name": "/environment/dev/MyTestKey2"
            }
        ]
    }

You should be able to see the CloudWatch event being triggered and the Lambda logs showing services being filtered and updated. Finally, once the new task definition has been deployed, the updated value should be visible in the running task’s environment.

Notes

Currently missing features or integration requirements for production ECS:

- Parameter store encrypted values support

- Triggered update in deploy pipeline

- Graceful handling of multiple changes

- Nice serverless build and deploy

- Wildcard matching on event patterns, allowing no trigger unless keys match
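On the last point, one possible direction is an event pattern that uses prefix matching on the parameter name, which current event rules support through content-based filtering. The sketch below only builds the pattern as a dictionary. It is an assumption about how the rule could be tightened, not what the template does today, and `event_pattern` is a hypothetical helper.

```python
import json


def event_pattern(keybase: str) -> dict:
    """Build an event pattern matching only Parameter Store changes whose
    key starts with the given base path."""
    prefix = keybase.rstrip("/") + "/"
    return {
        "source": ["aws.ssm"],
        "detail-type": ["Parameter Store Change"],
        "detail": {"name": [{"prefix": prefix}]},
    }


pattern = event_pattern("/environment/dev")
pattern_json = json.dumps(pattern)
```

With a rule like this, changes to keys outside the hierarchy would never invoke the function, rather than being filtered out after it starts.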